In today’s data-driven world, businesses rely heavily on data to make informed decisions, gain competitive advantages, and drive growth. To harness the full potential of that data, organizations often need a robust data warehousing solution. In this guide, you’ll learn a 7-step framework to design and implement a data warehouse using the Apache Stack (Spark, Hadoop, Hive), including architecture design, ETL workflows, and performance best practices.

"Apache Stack" is a term commonly used for a collection of open-source software and tools developed and maintained by the Apache Software Foundation (ASF). These tools are widely used to build and manage web applications, network services, and online data systems. The Apache Stack has some important advantages.

There is no denying that Apache offers numerous advantages. However, it also has some small constraints that are worth highlighting for a balanced perspective.

Building a data warehouse with the Apache Stack is a strategic move that comes with many benefits. This open-source distributed computing platform has become very popular in recent years, largely due to its versatility, scalability, and robustness. Let’s look more closely at the advantages of using the Apache Stack for your data warehousing needs.

The Apache Stack’s open-source nature is one of its most notable advantages. This means you may tap into the collective wisdom of a large community of developers and users who contribute to and support the ecosystem. Open-source solutions are typically less expensive and more flexible than proprietary ones.

Apache Spark, a key component of the Apache Stack, is well-known for its speed and capacity to handle large amounts of data. It provides a complete suite of data processing, machine learning, and graph analytics frameworks and APIs. Spark’s prominence in the data science and big data communities provides plenty of resources and knowledge, making it a top choice for enterprises looking for sophisticated analytics capabilities.

Another essential member of the stack, Apache Hadoop, specializes in storing and processing huge and complicated datasets. It stores data across a cluster of machines using a distributed file system (HDFS) and then processes and analyzes it using MapReduce. As a result, it is an excellent solution for enterprises dealing with large amounts of data, such as clickstream data, logs, and sensor data.

Hive is a data warehousing system with a SQL-like query language (HiveQL) that operates on top of Hadoop. It provides a familiar interface for data analysts and SQL developers, making it easier for them to work with big data. Hive optimizes queries and compiles them into jobs for an execution engine such as MapReduce, so analysts do not have to write those jobs by hand. Moreover, Hive is known for its reliability, which supports consistent and accurate results in data warehousing operations.

🔹Discovery of Business Objectives

The journey of building a data warehouse begins with understanding your business objectives. What are your tactical and strategic goals? Knowing these will guide your data warehousing efforts.

🔹Identification and Prioritization of Needs

Identify and prioritize the specific requirements from various projects within your organization. These needs will help define the scope of your data warehouse.

🔹Preliminary Data Source Analysis

Conduct a comprehensive analysis of your data sources. Before you can decide how to manage the data, you must understand its structures, volumes, sensitivities, and other characteristics.

🔹Outlining Scope & Security Requirements

Clearly define the scope of your data warehouse and establish security requirements. Data security is paramount in today’s regulatory environment, and outlining these requirements early is essential.

🔹Defining the Desired Solution

Once your goals are clear, define the desired data warehouse solution. Consider factors like data accessibility, performance, and scalability.

🔹Choosing the Optimal Deployment Option

Decide whether you want to deploy on-premises, in the cloud (for example, on AWS or Azure), or in a hybrid environment. This choice will impact your architectural design.

🔹Optimal Architectural Design

Select the best architectural design based on your goals and deployment choice. Consider factors like data volume, complexity, and expected growth.

🔹Selecting Data Warehouse Technologies

Choose the appropriate Apache Stack technologies based on your needs, including the number and volume of data sources, data flow requirements, and data security considerations.

🔹Defining Project Scope and Timeline

Create a detailed project scope, timeline, and roadmap for your data warehouse development. This will help you manage expectations and resources effectively.

🔹Scheduling Activities

Schedule activities for designing, developing, and testing your data warehouse. Estimate the effort required for each phase.

🔹Detailed Data Source Analysis

Perform a detailed analysis of each data source, considering data types, volumes, sensitivity, update frequency, and relationships with other sources.
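As a rough illustration of this kind of analysis, the sketch below profiles a small CSV sample for row count, inferred value types, and null counts per column. The column names and sample data are hypothetical, and a real source would usually be profiled at scale with Spark or a dedicated profiling tool rather than the standard library.

```python
import csv
import io
from collections import Counter


def infer_type(value: str) -> str:
    """Classify a raw string as 'null', 'int', 'float', or 'string'."""
    if value.strip() == "":
        return "null"
    for caster, name in ((int, "int"), (float, "float")):
        try:
            caster(value)
            return name
        except ValueError:
            continue
    return "string"


def profile_csv(text: str) -> dict:
    """Return the row count plus per-column type counts and null counts."""
    reader = csv.DictReader(io.StringIO(text))
    columns = {name: Counter() for name in reader.fieldnames or []}
    row_count = 0
    for row in reader:
        row_count += 1
        for name, value in row.items():
            # Short rows yield None for missing fields; treat them as empty.
            columns[name][infer_type(value or "")] += 1
    return {
        "row_count": row_count,
        "columns": {
            name: {"types": dict(counts), "nulls": counts.get("null", 0)}
            for name, counts in columns.items()
        },
    }


# Hypothetical sample extracted from an orders source.
SAMPLE = "order_id,amount,email\n1,19.99,a@example.com\n2,,b@example.com\n3,5,\n"
profile = profile_csv(SAMPLE)
```

A mixed type count for one column (here, `amount` holds both integers and decimals) is the kind of finding that feeds directly into the cleansing policies and data models designed in the next steps.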

🔹Designing Data Policies

Create data cleansing and security policies to ensure data quality and protect sensitive information.

🔹Designing Data Models

Develop data models that define entities, attributes, and relationships. Map data objects into the data warehouse.
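One common way to express entities and relationships in a warehouse is a star schema: a fact table that references dimension tables. The sketch below is only an illustration under assumptions. The tables `dim_customer` and `fact_sales` are hypothetical, and SQLite stands in for the warehouse engine purely so the example runs anywhere; in the Apache Stack the same model would typically be declared as Hive tables.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# One dimension (who bought) and one fact table (what was sold).
conn.executescript(
    """
    CREATE TABLE dim_customer (
        customer_key  INTEGER PRIMARY KEY,
        customer_name TEXT NOT NULL,
        country       TEXT NOT NULL
    );
    CREATE TABLE fact_sales (
        sale_id      INTEGER PRIMARY KEY,
        customer_key INTEGER NOT NULL REFERENCES dim_customer (customer_key),
        sale_date    TEXT NOT NULL,
        amount       REAL NOT NULL
    );
    """
)

conn.executemany(
    "INSERT INTO dim_customer VALUES (?, ?, ?)",
    [(1, "Acme", "DE"), (2, "Globex", "US")],
)
conn.executemany(
    "INSERT INTO fact_sales VALUES (?, ?, ?, ?)",
    [(10, 1, "2024-01-15", 120.0), (11, 1, "2024-01-16", 80.0), (12, 2, "2024-01-16", 50.0)],
)

# A typical analytical query: join the fact to a dimension and aggregate.
revenue_by_country = conn.execute(
    """
    SELECT d.country, SUM(f.amount)
    FROM fact_sales AS f
    JOIN dim_customer AS d ON d.customer_key = f.customer_key
    GROUP BY d.country
    ORDER BY d.country
    """
).fetchall()
```

Keeping descriptive attributes in dimensions and measures in facts is what lets analysts slice the same numbers by country, date, or any other dimension without changing the fact table.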

🔹ETL/ELT Processes

Design ETL/ELT processes for data integration and flow control. Ensure seamless data movement and transformation.

🔹Platform Customization

Customize your data warehouse platform according to your requirements.

🔹Data Security Configuration

Configure data security software to enforce access controls and encryption.

🔹ETL/ELT Development and Testing

Develop ETL (Extract – Transform – Load) / ELT (Extract – Load – Transform) pipelines and thoroughly test them to ensure data accuracy and reliability.
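To make "develop and thoroughly test" concrete, here is a minimal sketch of an ETL pipeline split into three small functions, which keeps each stage testable in isolation. It uses plain Python and an in-memory list as the load target; the column names and cleansing rules (drop rows without an `order_id`, remove duplicate keys, cast `amount`) are assumptions chosen for illustration.

```python
import csv
import io


def extract(raw_csv: str) -> list[dict]:
    """Parse CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(raw_csv)))


def transform(rows: list[dict]) -> list[dict]:
    """Drop rows without an order_id, remove duplicate ids, cast amount."""
    seen = set()
    cleaned = []
    for row in rows:
        order_id = (row.get("order_id") or "").strip()
        if not order_id or order_id in seen:
            continue
        seen.add(order_id)
        cleaned.append({"order_id": order_id, "amount": float(row["amount"])})
    return cleaned


def load(rows: list[dict], target: list) -> int:
    """Append rows to the target table and return how many were loaded."""
    target.extend(rows)
    return len(rows)


# Hypothetical input with one duplicate and one row missing its key.
RAW = "order_id,amount\nA1,10.50\nA1,10.50\n,3.00\nA2,7\n"
warehouse_table: list[dict] = []
loaded = load(transform(extract(RAW)), warehouse_table)
```

Because `transform` is a pure function, a unit test only needs a handful of hand-written rows that cover each rule; the same structure carries over when the stages are implemented with Spark DataFrames.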

🔹Data Migration and Quality Assessment

Migrate data into the data warehouse and assess its quality. Identify and address any issues.
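A quality assessment after migration often starts with a few mechanical checks. The sketch below compares row counts between source and target, counts duplicate keys, and counts rows with missing required fields. The function name, field names, and sample rows are hypothetical, and real checks would normally run as queries against the warehouse itself.

```python
def quality_report(source_rows, target_rows, key, required):
    """Summarize basic post-migration checks for one table."""
    keys = [row[key] for row in target_rows]
    rows_missing_required = sum(
        1
        for row in target_rows
        if any(row.get(col) in (None, "") for col in required)
    )
    return {
        "source_count": len(source_rows),
        "target_count": len(target_rows),
        "row_count_match": len(source_rows) == len(target_rows),
        "duplicate_keys": len(keys) - len(set(keys)),
        "rows_missing_required": rows_missing_required,
    }


source = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": "b@example.com"},
    {"id": 3, "email": "c@example.com"},
]
# The migrated copy lost row 3, duplicated row 2, and blanked one email.
target = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 2, "email": "b@example.com"},
]
report = quality_report(source, target, key="id", required=["email"])
```

Note that the row counts match here even though the data is wrong, which is why a count comparison alone is not a sufficient quality check.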

🔹Introducing the Data Warehouse to Users

Introduce the data warehouse to business users, ensuring they understand how to access and leverage the data.

🔹User Training

Conduct user training sessions and workshops to empower your team to make the most of the data warehouse.

🔹Performance Tuning

Monitor and optimize ETL/ELT performance to maintain data warehouse efficiency.

🔹Adjusting Performance and Availability

Make adjustments to ensure the data warehouse meets performance and availability expectations.

🔹User Support

Provide ongoing support to end users, helping them address any issues or queries.

Data warehouses serve multiple critical functions in a business context, including facilitating strategic decision-making, aiding in budgeting and financial planning, supporting tactical decision-making, enabling performance management, handling IoT data, and serving as operational data repositories. When executed effectively, data warehousing can deliver significant value to a company.

Building a data warehouse for your organization requires a skilled team comprising a project manager, business analyst, data warehouse system analyst, solution architect, data engineer, QA engineer, and DevOps engineer. Instead of building an in-house development team, which may incur substantial costs, consider leveraging the expertise of ITC Group. We offer a seasoned team of developers experienced in data warehousing who build custom software solutions at a reasonable price point.

A production-ready data warehouse built with Apache technologies typically follows a layered architecture:

Data is ingested from:

Tools:

Raw data is stored in HDFS using structured formats such as:

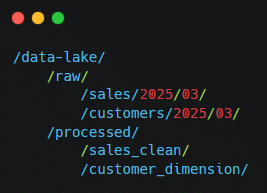

Example directory structure:

Partitioning strategy example:

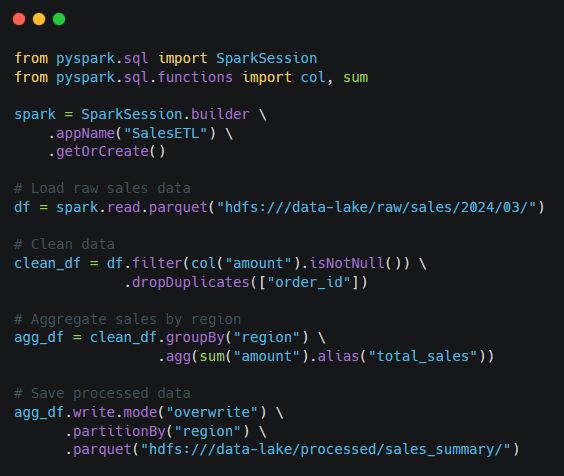
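As a text companion, here is a small sketch of how date-based partition directories can be generated. Hive-style partitioning lays data out in `key=value` directories; the base path, table name, and the year/month/day scheme used here are assumptions for illustration and may differ from the layout shown in the image.

```python
from datetime import date
from pathlib import PurePosixPath


def partition_path(base: str, table: str, day: date) -> str:
    """Build a Hive-style key=value partition directory for one day."""
    return str(
        PurePosixPath(base)
        / table
        / f"year={day.year:04d}"
        / f"month={day.month:02d}"
        / f"day={day.day:02d}"
    )


# Hypothetical raw-zone location for an orders table.
path = partition_path("/warehouse/raw", "orders", date(2024, 1, 5))
# path == "/warehouse/raw/orders/year=2024/month=01/day=05"
```

Zero-padding the month and day keeps directories in chronological order when listed, and lets queries that filter on these columns skip unrelated directories.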

Spark transforms raw data into analytics-ready datasets.

Key transformations:

Processed datasets are exposed via:

Below is a simplified Spark ETL example in PySpark:

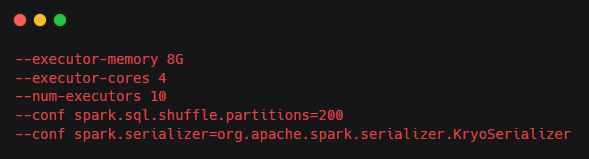
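For readers who cannot view the image, the following is a text sketch of a comparable job, not a transcription of the listing above. It assumes a raw orders dataset in JSON with hypothetical columns `order_id`, `customer_id`, `amount`, and `order_ts`. The functions only call standard DataFrame methods, so they accept any `SparkSession` and DataFrame passed in.

```python
def transform_orders(raw_df):
    """Clean a raw orders DataFrame into an analytics-ready shape."""
    return (
        raw_df
        # Keep one row per order.
        .dropDuplicates(["order_id"])
        # Discard rows with missing or negative amounts.
        .filter("amount IS NOT NULL AND amount >= 0")
        # Cast types and derive the partition column from the timestamp.
        .selectExpr(
            "order_id",
            "customer_id",
            "CAST(amount AS DOUBLE) AS amount",
            "to_date(order_ts) AS order_date",
        )
    )


def run_orders_etl(spark, input_path, output_path):
    """Read raw JSON, transform it, and write Parquet partitioned by date."""
    raw_df = spark.read.json(input_path)
    (
        transform_orders(raw_df)
        .write.mode("overwrite")
        .partitionBy("order_date")
        .parquet(output_path)
    )
```

With PySpark installed, a session can be created with `SparkSession.builder.appName("orders-etl").getOrCreate()` and passed to `run_orders_etl` together with the input and output paths. Be aware that `mode("overwrite")` replaces the existing contents of the output path by default, so incremental loads usually need a different write strategy.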

Production Spark jobs require tuning for performance.

Example configuration:

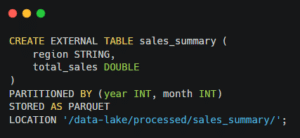
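As a text companion, the sketch below keeps tuning settings in one dictionary and turns them into a `spark-submit` command. The configuration keys are standard Spark properties, but the values, the YARN cluster deployment, and the script name `orders_etl.py` are placeholders, not recommendations and not necessarily the settings shown in the image. Suitable values depend on cluster size and workload.

```python
import shlex

# Placeholder values; tune them against your own cluster and data volume.
SPARK_CONF = {
    "spark.executor.memory": "4g",
    "spark.executor.cores": "4",
    "spark.sql.shuffle.partitions": "200",
    "spark.sql.adaptive.enabled": "true",
    "spark.serializer": "org.apache.spark.serializer.KryoSerializer",
}


def build_submit_command(app: str, conf: dict[str, str]) -> list[str]:
    """Assemble a spark-submit argument list from a config dictionary."""
    cmd = ["spark-submit", "--master", "yarn", "--deploy-mode", "cluster"]
    for key, value in sorted(conf.items()):
        cmd += ["--conf", f"{key}={value}"]
    cmd.append(app)
    return cmd


command = build_submit_command("orders_etl.py", SPARK_CONF)
command_line = shlex.join(command)  # printable form for logs or scripts
```

Keeping the settings in version-controlled code rather than in ad hoc shell commands makes it easier to review tuning changes and to reproduce a job run later.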

Example Hive external table using Parquet:

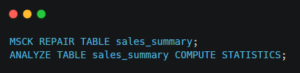
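As a text companion, the sketch below generates HiveQL for an external, partitioned Parquet table. The database, table, columns, and HDFS location are hypothetical and may differ from the image. An external table only registers metadata over files that already exist, so dropping it leaves the data in place.

```python
def external_table_ddl(table, columns, partitions, location):
    """Render a CREATE EXTERNAL TABLE statement for a Parquet dataset."""
    cols = ",\n".join(f"  {name} {dtype}" for name, dtype in columns)
    parts = ", ".join(f"{name} {dtype}" for name, dtype in partitions)
    return (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS {table} (\n{cols}\n)\n"
        f"PARTITIONED BY ({parts})\n"
        "STORED AS PARQUET\n"
        f"LOCATION '{location}'"
    )


# Hypothetical curated orders table.
ddl = external_table_ddl(
    table="analytics.orders",
    columns=[("order_id", "STRING"), ("customer_id", "STRING"), ("amount", "DOUBLE")],
    partitions=[("order_date", "DATE")],
    location="hdfs:///warehouse/curated/orders",
)

# Partition directories written outside Hive must be registered before
# they become visible to queries; MSCK REPAIR TABLE scans for them.
repair = "MSCK REPAIR TABLE analytics.orders"
```

Note that partition columns appear only in `PARTITIONED BY`, not in the main column list, because their values come from the directory names rather than from the Parquet files.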

After creating the table:

A Data Warehouse in the Apache ecosystem is a centralized storage system built using technologies such as Apache Hadoop, Apache Spark, and Apache Hive. It enables large-scale data storage, transformation, and analytical querying for business intelligence and reporting.

A typical Apache-based data warehouse architecture includes:

ELT is often preferred in modern big data architectures because distributed processing engines can handle transformations efficiently at scale.

To design a scalable data warehouse:

Performance optimization techniques include:

Yes, when combined with tools like Kafka and Spark Streaming, the Apache Stack can support near real-time data ingestion and processing. However, architecture decisions depend on latency requirements and workload scale.

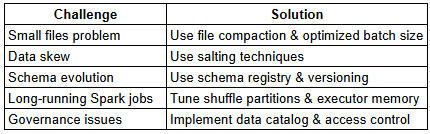

Common challenges include:
